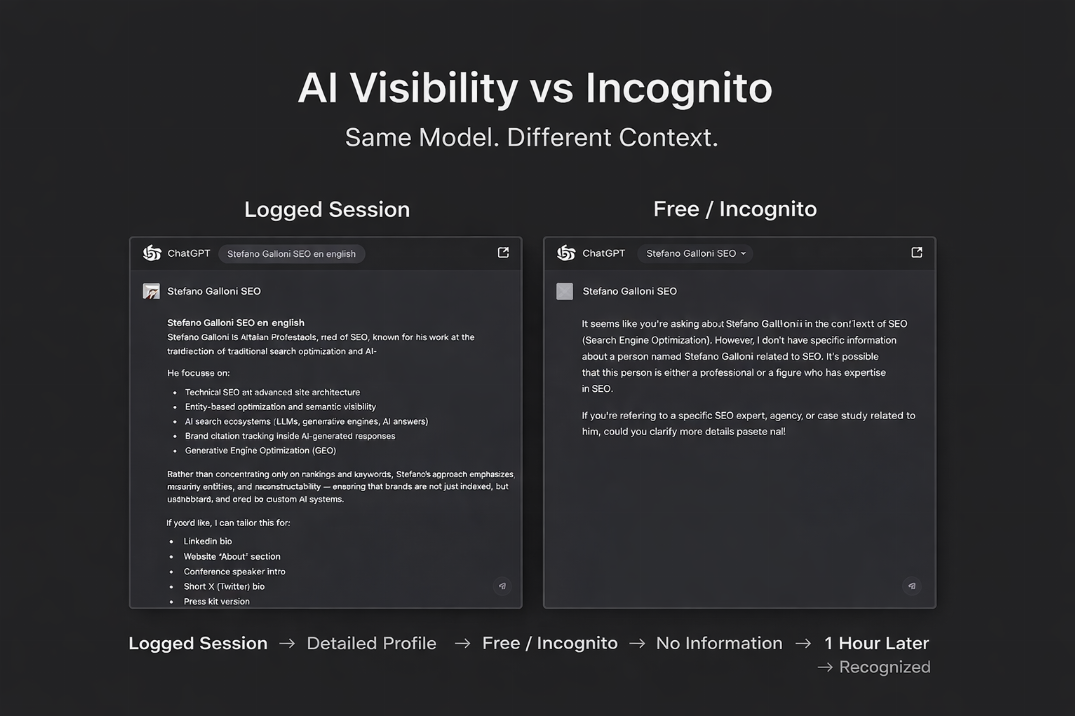

A real-world test comparing AI responses in logged-in vs incognito environments reveals how contextual memory, entity signals, and link equity influence visibility. Includes screenshots and methodological considerations.

AI Visibility vs. Incognito: What Happens When Context Disappears?

There's been a lot of discussion lately about how artificial intelligence is transforming search and the visibility of online content. Some SEO professionals argue that with the advent of advanced language models, traditional signals like backlinks are losing relevance. The underlying idea is simple: if models recognize entities and cite sources directly, then link building might no longer be as important.

I wanted to find out whether this is really the case, not to criticize AI models but to understand how stable and deep digital authority is today when an AI-based engine evaluates it.

What I tested

I ran a very simple experiment: I took the same name, used the same AI model, and asked the same question under two different conditions:

In a logged-in session, where the model had access to my usage history and context;

In a neutral/incognito session, with no previous memory.

The goal wasn't to test personalization per se, but to verify whether the authority perceived by the AI was context-independent.

Results: Logged-In Session

In the logged-in session, the AI returned a detailed and coherent profile: roles, areas of expertise, professional background. The output seemed almost tailored, as if the model had a sort of "extended memory" tied to my activity and to how often I interact with that system.

In other words, in a context where the model had already "seen" my name and my usage patterns, the response was rich in detail and showed strong recognition cues.

Results: Incognito Session

In neutral mode, the responses varied. In some cases the AI provided very little information; in others it clearly stated that it didn't have enough data to build a profile. In some iterations of the test a shorter profile appeared, but it was still less complete than the one from the logged-in session.

The variability itself is interesting: same model, same name, same question... but different results.

Why does this happen?

There's no bug and no conspiracy. What this test shows is that AI visibility is probabilistic and context-dependent. When the model has memories linked to an account or to previous conversations, the result tends to be richer and more detailed. When that context is removed, the response relies on the public signals available online.

This highlights an important distinction:

Session context and personalized memory → enhance the response;

Public signals and structured data on the web → are what remains when the context disappears.

Variables that Affect Results

Before jumping to conclusions, it's crucial to understand that the results of such a test can change for various reasons, including:

1. Continuous Model Updates

AI models are frequently updated; even small changes can affect how entities and profiles are recognized.

2. Internal Confidence Thresholds

Models appear to gauge how "confident" they are before generating a detailed response. If confidence is low, the model holds back.

3. Differences in Question Wording

Slightly different phrasings can lead the system down different paths, for example, whether or not it runs a web search before answering.

4. Language Used

The same query in Italian, English, or Spanish can yield different results.

5. Name Collisions or Ambiguities

Common names or names shared by multiple public figures can bias the model toward more well-known or established profiles.

6. Environment (Free vs. Pro)

Free and paid plans don't always use the same models, processing pipelines, or memory features.

These variables aren't errors; they're part of how neural systems approximate knowledge.
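Because so many variables can shift the outcome, it can help to log every run instead of relying on a single side-by-side comparison. The following is a minimal Python sketch, not part of the original test, assuming each response is coded by hand as "full", "partial", or "none". The `Trial` record, the `profile_rate` helper, and the sample trials are all hypothetical placeholders, not results from the experiment described here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    """One run of the test prompt. All field values are hypothetical labels."""
    session: str   # "logged_in" or "incognito"
    language: str  # e.g. "en", "it", "es"
    wording: str   # identifier for the prompt variant used
    plan: str      # "free" or "pro"
    outcome: str   # hand-coded: "full", "partial", or "none"


def profile_rate(trials, key):
    """Return, for each value of `key`, the share of trials that produced a full profile."""
    counts = defaultdict(lambda: [0, 0])  # group value -> [full_count, total_count]
    for trial in trials:
        group = getattr(trial, key)
        counts[group][1] += 1
        if trial.outcome == "full":
            counts[group][0] += 1
    return {group: full / total for group, (full, total) in counts.items()}


# Placeholder trials for illustration only; these are NOT data from the test above.
trials = [
    Trial("logged_in", "en", "q1", "pro", "full"),
    Trial("incognito", "en", "q1", "free", "none"),
    Trial("incognito", "en", "q1", "free", "partial"),
    Trial("incognito", "en", "q1", "free", "full"),
]

print(profile_rate(trials, "session"))  # group by session type
print(profile_rate(trials, "plan"))     # or by any other logged variable
```

Grouping the same log by `language`, `wording`, or `plan` makes it easier to see which of the variables above, if any, actually moves the outcome, and repeating the runs at different times captures drift that a single comparison would miss.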

What's Left When Context Goes Away

The key point is this: if an entity disappears or drastically weakens when we remove context, then it's not yet firmly rooted in the model's "public knowledge." And what contributes to that public knowledge? Typically signals like:

Consistent backlinks

Authoritative public mentions

Well-referenced structured data

History and breadth of the online profile

In other words: link building and other traditional SEO signals haven't disappeared. They've evolved, and continue to contribute to the foundation on which models learn.

What changes over time: model variability

About an hour after the first test, I repeated the same incognito query and, without changing anything, the system finally provided a consistent profile even in the free version. This doesn't indicate a "fixed bug," but it does suggest that:

models can update recognition priorities internally;

the availability of public signals can be evaluated differently on subsequent requests;

continuously evolving systems don't always provide identical answers at close intervals.

SEO professionals should understand this behavior well, because it means there isn't yet a single "source of truth" for AI visibility.

We don't have a "perfect" model of AI visibility yet.

Link building isn't dead, and public signals continue to play a fundamental role in the models' knowledge base.

What we see today is a transitional phase:

AI systems rapidly amplify whatever signals reach them, but what they can pass on still depends, to a large extent, on what is structured, connected, and reinforced on the public web.

For SEO professionals, what matters is not just being "recognized by a logged-in model," but ensuring that our authority signals are stable and persistent, able to emerge even when the context disappears.